Encoding plays a crucial role in software development. It is the process of converting data from one format to another. One such encoding is Unicode Transformation Format-16 (UTF16), which is used to encode characters in computers. In this article, we will go through the concept of UTF16 Encode, its working, scenarios, key features, misconceptions, and FAQs.

What is UTF16 Encode?

UTF16 is a character encoding that uses 16 bits to represent characters. It is used to encode characters in computer systems and has become the standard for character encoding. UTF16 Encode is the process of converting characters into their corresponding UTF16 code units. In simple terms, it is a way to store and represent Unicode characters in 16-bit form.

How does UTF16 Encode work?

UTF16 Encode works by converting a Unicode character into a 16-bit value known as a code unit. It uses two code units for characters that cannot be represented in a single unit. For example, the letter “A” is represented as 0041 in UTF16, while the emoji ”🙂” is represented as D83D DE42. This process allows developers to store and transmit Unicode characters in a more compact form.

Developers can use various programming languages such as Java, Python, C++, and more to implement UTF16 Encode. Here is an example of how to encode a string in Java:

String sample = "Hello World!";

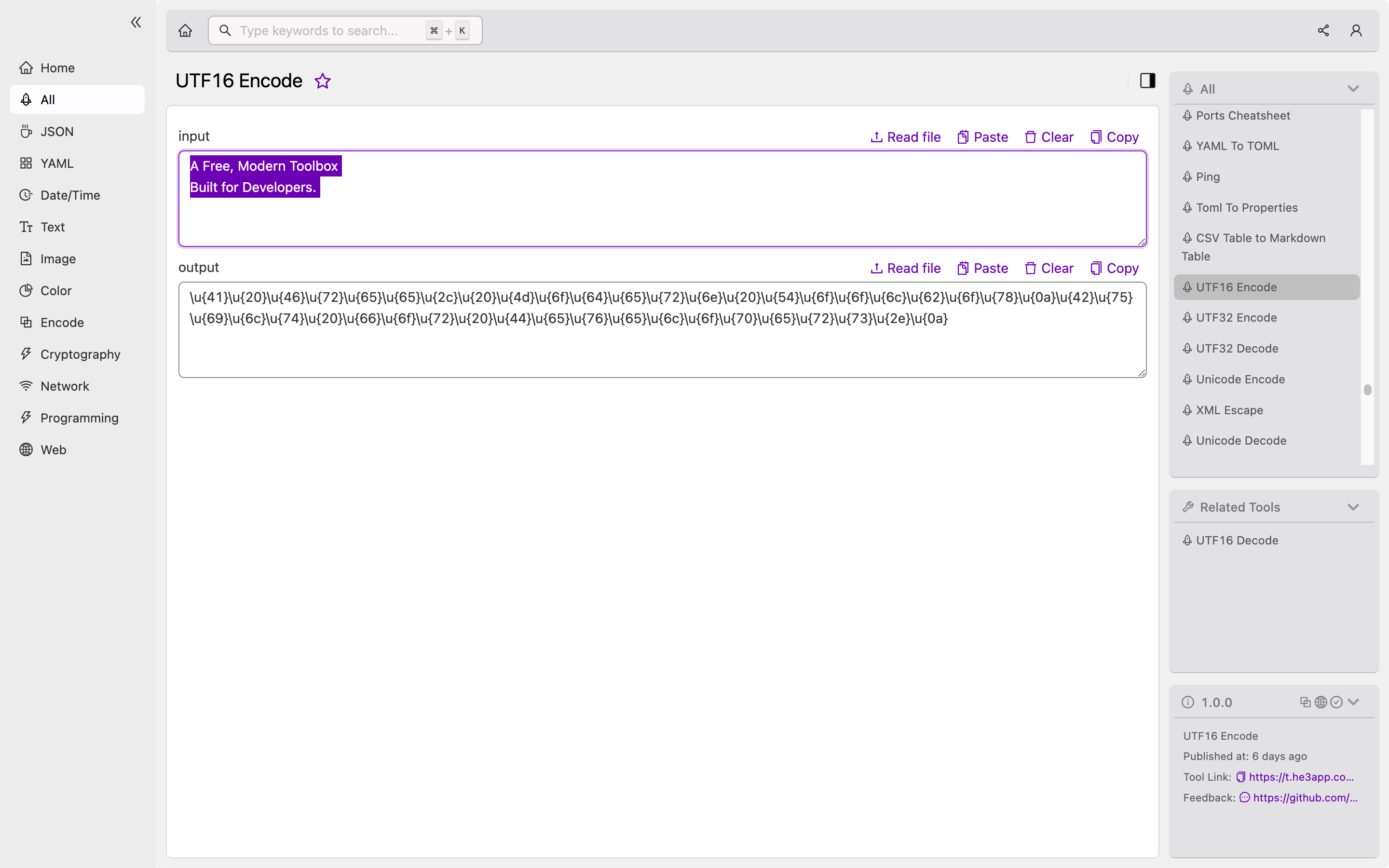

byte[] utf16Bytes = sample.getBytes("UTF-16");Or you can use UTF16 Encode tool in He3 Toolbox (https://t.he3app.com?al3i ) easily.

Scenarios for developers

There are various scenarios where UTF16 Encode comes in handy for developers. Some of these include:

- Internationalization of software

- Storing and transmitting text in computer systems, databases, and files

- Web development

- Mobile application development

Key Features

Here are some of the key features of UTF16 Encode:

| Feature | Description |

|---|---|

| Compatibility | UTF16 is compatible with ASCII and other Unicode formats |

| Efficient | It uses fewer bytes to encode characters |

| Multilingual support | It supports characters from various languages |

| Error detection | It uses a specific error detection method to ensure data is accurately transmitted |

Misconceptions and FAQs

Misconception: UTF16 and UTF8 are the same

UTF8 and UTF16 are both character encodings used to represent Unicode characters, but they differ in their bit representation. UTF8 uses a variable number of bytes to represent characters, while UTF16 uses 16 bits. UTF8 is more commonly used in web development, while UTF16 is used in software development.

FAQ 1: Can UTF16 encode all characters?

UTF16 can encode all the characters defined in the Unicode standard. However, not all programs or systems support all Unicode characters.

FAQ 2: Is UTF16 a better choice than other encodings?

Whether UTF16 is a better choice than other encodings depends on the specific needs of your software or application. However, UTF16 is widely used in software development, and its compatibility with ASCII and other Unicode formats makes it a popular choice.

Conclusion

Given the importance of encoding in software development, it is essential for developers to understand the concept and working of UTF16 Encode. This article has explained what UTF16 Encode is, how it works, scenarios where it is useful, key features, misconceptions, and FAQs. Developers can use this knowledge to implement UTF16 Encode in their projects and build better software systems.

References: